We’ve become accustomed to the steady annual release of new smartphones, and at the forefront of these updates is almost always the camera. That’s certainly true of the iPhone 17, which features Apple’s most advanced iPhone camera yet. Or rather “cameras”, I should say, with three 48MP sensors covering ultra-wide, wide-angle and telephoto focal lengths.

The 18MP front-facing camera also now features Centre Stage technology, which automatically zooms and pans to keep faces centred in the frame, so you no longer have to awkwardly reposition your phone to fit everyone into a group shot.

Even Hollywood has embraced the iPhone’s portability and affordability. Films like 28 Years Later were shot on the previous-generation iPhone 16 Pro, and Stormzy’s acting debut Big Man showcased features such as smooth 4K 120fps slow motion.

It’s got me thinking: do I really need a professional camera anymore, or could I save thousands and rely on my iPhone? Smartphones have become remarkably powerful. Not long ago, we carried separate music players, calculators, portable TVs, GPS units, dictaphones, wallets, torches – you name it. Now the smartphone really has become a Swiss Army Knife of powerful tech that fits right in your pocket.

Both the iPhone 17 and 17 Pro use sensors with 48MP, which, on paper, is a higher resolution than my professional full-frame Canon EOS R5 at 45MP. The key difference lies in how that resolution is achieved.

An iPhone has three cameras with individual lenses covering ultra-wide angle to telephoto, with Apple’s clever software making it easy to switch between them. On a DSLR or mirrorless camera, it’s less convenient. You either have to carry around and switch lenses, or stick with one do-it-all superzoom, which may come with the caveat of a more restrictive aperture selection or lesser image quality.

That said, the creative freedom of an interchangeable-lens system far outweighs the slick lens switching of the iPhone. Plus, for my RF system, there are over 40 lenses and more than 200 older EF lenses which can be adapted.

The iPhone 17’s 48MP resolution sounds impressive next to the 45MP of my full-frame camera. But physics tells a different story. When you cram more pixels onto a smaller sensor, each pixel – or “photosite” – has to be smaller.

Think of these photosites like buckets collecting light. On a larger sensor, the buckets are bigger, so they can gather more light and produce a cleaner, more detailed signal. On a smaller sensor, the buckets are tiny; they fill up more quickly and capture less light overall.
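To put rough numbers on the bucket analogy, here is a minimal Python sketch that estimates photosite size from sensor dimensions and megapixel count. The 36 x 24mm full-frame size is standard, but the 9.8 x 7.3mm smartphone sensor is purely an assumed, illustrative figure rather than a published iPhone 17 specification, and the calculation ignores the gaps between photosites.

```python
import math


def pixel_pitch_um(width_mm: float, height_mm: float, megapixels: float) -> float:
    """Approximate photosite pitch in micrometres.

    Assumes square photosites that tile the entire sensor area.
    """
    area_um2 = (width_mm * 1000) * (height_mm * 1000)
    return math.sqrt(area_um2 / (megapixels * 1_000_000))


# Full-frame sensor (36 x 24mm) at 45MP, as on my camera.
full_frame_pitch = pixel_pitch_um(36.0, 24.0, 45)

# Hypothetical smartphone main sensor at 48MP.
# The 9.8 x 7.3mm dimensions are an assumption for illustration only.
phone_pitch = pixel_pitch_um(9.8, 7.3, 48)

# How much more surface area each full-frame "bucket" has.
bucket_area_ratio = (full_frame_pitch / phone_pitch) ** 2

print(f"Full-frame photosite pitch: {full_frame_pitch:.2f} µm")
print(f"Smartphone photosite pitch: {phone_pitch:.2f} µm")
print(f"Each full-frame photosite is about {bucket_area_ratio:.0f}x larger in area")
```

Under those assumptions, the full-frame photosites come out at roughly 4.4µm across against roughly 1.2µm on the phone, so each full-frame bucket has around 13 times the light-gathering area.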

Of course, Apple’s software and AI can go some way towards cleaning up the resulting noise and overcoming these physical limitations. In my experience, iPhone photos can look incredible when viewed on the small screen of a smartphone or tablet, but blow them up to A4, A3 or larger, and you can really see the difference compared to a full-frame camera.

AI correction doesn’t stop there. iPhones, and smartphones in general, also have much smaller sensors than full-frame and even APS-C – some 12-18 times smaller. By nature, these smaller sensors cannot achieve a true shallow depth of field. But Apple (and Google and Samsung – they all have their own versions) uses software-based portrait modes that artificially blur the background. I’ve experienced mixed results with modes such as these; they can be inconsistent, especially around fine details like hair or complex edges. Larger sensors, such as APS-C or full-frame, achieve this effect optically and more reliably. That said, smartphone portrait modes are getting better with every generation.
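The depth-of-field gap can be estimated with the usual crop-factor rule of thumb: multiply the lens’s f-number by the crop factor to get the full-frame aperture that would give similar background blur at the same framing. The sketch below is only an approximation, and both the 9.8 x 7.3mm sensor size and the f/1.8 lens are assumed, illustrative values rather than specifications of any particular phone.

```python
import math

# Diagonal of a 36 x 24mm full-frame sensor, used as the reference.
FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)


def crop_factor(width_mm: float, height_mm: float) -> float:
    """Ratio of the full-frame diagonal to this sensor's diagonal."""
    return FULL_FRAME_DIAGONAL_MM / math.hypot(width_mm, height_mm)


def equivalent_f_number(f_number: float, width_mm: float, height_mm: float) -> float:
    """Full-frame f-number giving roughly the same depth of field at the same framing."""
    return f_number * crop_factor(width_mm, height_mm)


# Hypothetical smartphone main camera: assumed 9.8 x 7.3mm sensor and f/1.8 lens.
phone_crop = crop_factor(9.8, 7.3)
phone_equivalent = equivalent_f_number(1.8, 9.8, 7.3)

print(f"Crop factor: {phone_crop:.2f}")
print(f"f/1.8 on this sensor blurs roughly like f/{phone_equivalent:.1f} on full frame")
```

With those assumed figures, a bright-sounding f/1.8 phone lens behaves more like f/6.4 on full frame for background blur, which is why portrait modes lean on software instead.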

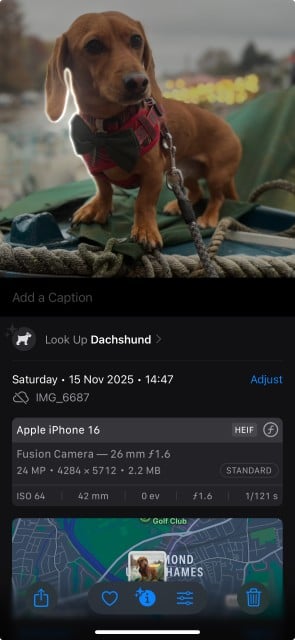

Of course, iPhones have other advantages that my big full-frame camera does not, like instant sharing to social media, which is very handy, and Visual Look Up, which uses AI to scan photos and tell you more about the subjects it detects within the frame – this blew my mind the first time I used it.

But ultimately, they lack too many important DSLR or mirrorless camera features, such as fine exposure and shutter speed control. And while some iPhones do have the ability to capture RAW files, it’s not as practical as a pro camera setup with dual card backup, nor will those RAW files have the greater dynamic range my Canon EOS R5 captures.

So, can the iPhone take brilliant photos, and is it the camera that I always have with me? Yes.

But I need consistency and reliability across all lighting conditions, along with the flexibility to switch lenses for a different outcome, all of which are absolutely essential when handing over deliverables to paying clients.

Not to mention, I think I’d get kicked out if I turned up to shoot a wedding and whipped out my smartphone!

The Wex Blog
